Ilya Kipnis at QuantStrat TradeR reminds us that the Hidden Markov Model (HMM), which can be a powerful tool for detecting regime change in markets and macro, has its limitations and pitfalls. In particular, Kipnis reports that HMM’s value as a prediction tool for the stock market is dubious. That’s not surprising. Predicting, after all, is always difficult bordering on impossible. But all’s not lost. HMM is still quite useful as a framework for providing relatively objective and reliable signals on the current regime state for equities. A solid forecast would be preferable, of course, but mere mortals have to take what they can get.

Regular readers of The Capital Spectator know that HMM analytics on the S&P 500 make semi-regular appearances on these pages (see here, here, and here, for some examples). After reading the QuantStrat TradeR post, I thought I’d share a few thoughts on how I use HMM, including some comments on where the model reaches its limits.

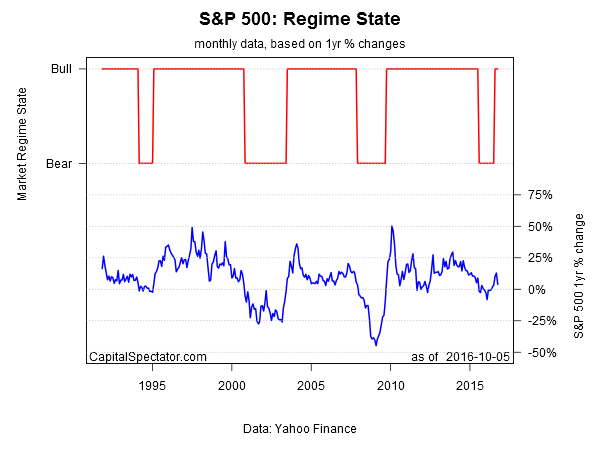

Let’s start with an update of where we stand at the moment, based on S&P data through yesterday (Oct. 5). The analytics are run with the depmixS4 package in R, matching the procedure used by Kipnis. As you can see below, the HMM-estimated bear-market probability has fallen sharply in the last few months.

The slide changed the regime state to bull in August, ending a 12-month run of a bear-market regime.

As for the details on how I’m computing the numbers, the main point is that I’m not using HMM to model the future. As Kipnis advises, that route doesn’t look especially productive. But I’m not looking for oracular visions. Rather, my goal is simply to develop a real-time, quantitative estimate of bull and bear regimes: the so-called posterior probabilities. I’ll be the first to admit that this methodology isn’t flawless, but then nothing is. Even after considering the caveats, HMM remains a useful supplement for monitoring trends in stocks and other asset classes in an asset allocation framework.

The challenge, as always, is keeping the noise to a minimum, which translates into a tradeoff between favoring timely signals vs. reliable signals. My preference is to use monthly data, with the current month updated daily to reflect the latest prices. This is hardly perfection. The downside, by design, is a bit of a lag in the signal. Using daily data, by contrast, offers the potential for faster responses to current conditions, but at the cost of more noise. I prefer to err on the side of greater reliability by giving up a degree of timeliness.

Note, too, that I prefer to use rolling one-year returns (based on monthly data with the latest daily return for the current month) as an input. By contrast, Kipnis and other researchers typically kick the tires with daily performance. But here, too, I find that giving up some of the timeliness aspect in exchange for a higher level of reliability via one-year performance is worthwhile. That may not suit traders, but I’m mainly focused on asset allocation in various tactical and strategic applications.
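For readers who want to see the arithmetic behind the input series, here is a minimal sketch in plain Python (the analytics in this post are run in R, so this is an illustration, not my actual code). The function name `rolling_one_year_returns` and the price list are hypothetical; the last price stands in for the latest daily close of the current month.

```python
def rolling_one_year_returns(monthly_closes, window=12):
    """Trailing `window`-month percentage change for every month
    that has a full window of history behind it."""
    if window < 1:
        raise ValueError("window must be at least 1")
    returns = []
    for i in range(window, len(monthly_closes)):
        start = monthly_closes[i - window]  # close `window` months earlier
        end = monthly_closes[i]             # close for the month being measured
        returns.append(end / start - 1.0)
    return returns


# Hypothetical month-end closes (not real S&P 500 data). The final value
# plays the role of the current month, updated with the latest daily close.
closes = [100.0, 102.0, 101.0, 105.0, 107.0, 110.0, 108.0,
          111.0, 115.0, 117.0, 116.0, 120.0, 110.0, 112.2]

one_year = rolling_one_year_returns(closes)
for r in one_year:
    print(f"{r:.2%}")
```

Each new daily close only changes the last element of the list, so the series is refreshed daily while every earlier observation stays on a month-end basis.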

The ability to model more than two regime states is another possibility, but this gets too messy for my tastes. As such, I prefer to keep things simple and focus on a two-state model, i.e., bull or bear.
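To make the two-state idea concrete, here is a rough Python sketch of how a real-time bear-market probability can be computed once the model's parameters are known. It is a sketch under stated assumptions, not the procedure behind the chart: the means, standard deviations, and transition probabilities below are invented for illustration, whereas in practice they would be estimated from the data (for instance by a package such as depmixS4). The function `filtered_bear_probability` is a hypothetical name, and the recursion shown is the standard forward (filtering) step for an HMM with Gaussian returns in each state.

```python
import math


def gaussian_pdf(x, mean, sd):
    """Density of a normal distribution at x."""
    z = (x - mean) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2.0 * math.pi))


def filtered_bear_probability(returns, means, sds, trans, initial):
    """Forward (filtering) recursion for a two-state Gaussian HMM.

    State 0 = bull, state 1 = bear.
    trans[i][j] = P(next state is j | current state is i).
    Returns P(bear at t | returns observed up to and including t).
    """
    probs = []
    prior = list(initial)
    for x in returns:
        # Update: weight each state's prior by how likely it makes x.
        joint = [prior[s] * gaussian_pdf(x, means[s], sds[s]) for s in (0, 1)]
        total = joint[0] + joint[1]
        posterior = [j / total for j in joint]
        probs.append(posterior[1])
        # Predict: push the posterior through the transition matrix
        # to get the prior for the next observation.
        prior = [posterior[0] * trans[0][s] + posterior[1] * trans[1][s]
                 for s in (0, 1)]
    return probs


# All numbers below are assumptions for illustration, not fitted values.
one_year_returns = [0.15, 0.10, -0.05, -0.20, -0.25]
means = [0.12, -0.15]   # bull, bear mean one-year return
sds = [0.10, 0.15]      # bull, bear standard deviation
trans = [[0.95, 0.05],  # bull -> bull, bull -> bear
         [0.10, 0.90]]  # bear -> bull, bear -> bear
initial = [0.5, 0.5]

bear_prob = filtered_bear_probability(one_year_returns, means, sds, trans, initial)
for p in bear_prob:
    print(f"{p:.3f}")
```

The high probabilities on the diagonal of the transition matrix are what make regimes sticky: a single bad reading nudges the bear probability up, but it takes a run of them to flip the state.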

Note, too, the possibility of using HMM in a multi-factor framework. For instance, Eran Raviv reviewed the results of modeling volatility (the VIX Index) and the S&P 500 in an HMM framework, albeit with daily returns. The results weren’t terribly encouraging.

In fact, I’ve tested multi-factor combinations in an HMM framework using one-year changes, but the results are mixed at best. Nonetheless, this is an ongoing research project.

Overall, I’ve found that a simple two-state HMM via monthly data has merit as a risk-management tool for stocks and other asset classes. I don’t use it in isolation, but as a complement to other metrics. HMM has attractive features for modeling the present. Using it to predict the future, by contrast, is probably the equivalent of trying to get blood out of a stone.
